From InfoQ

Anthropic Introduces Managed Agents to Simplify AI Agent Deployment

Our take

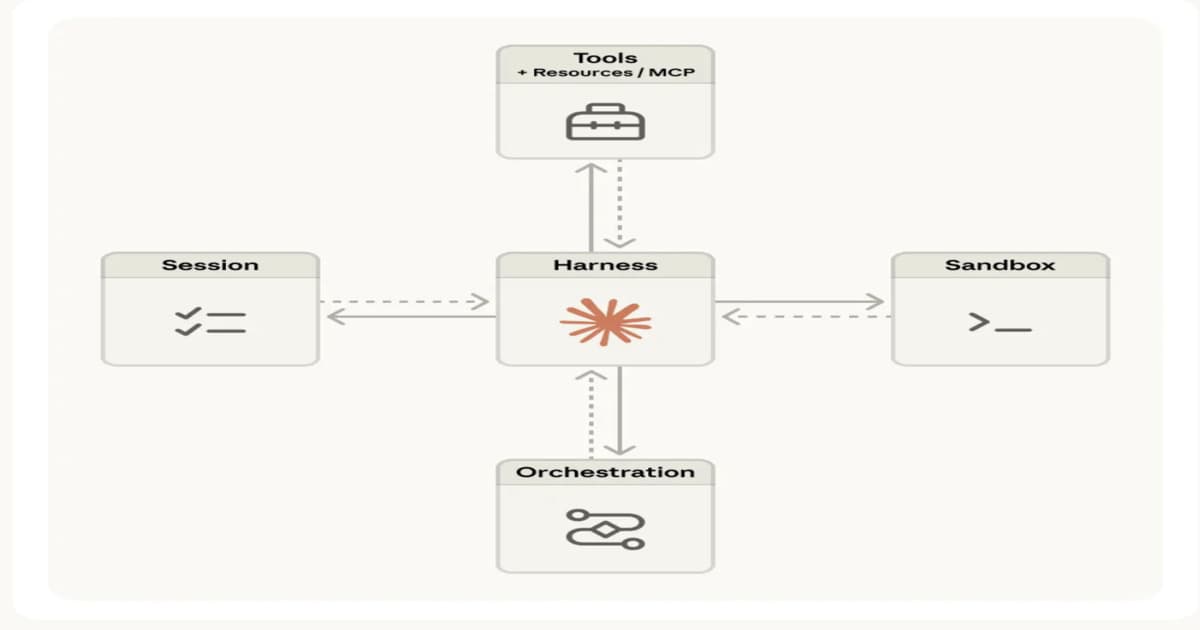

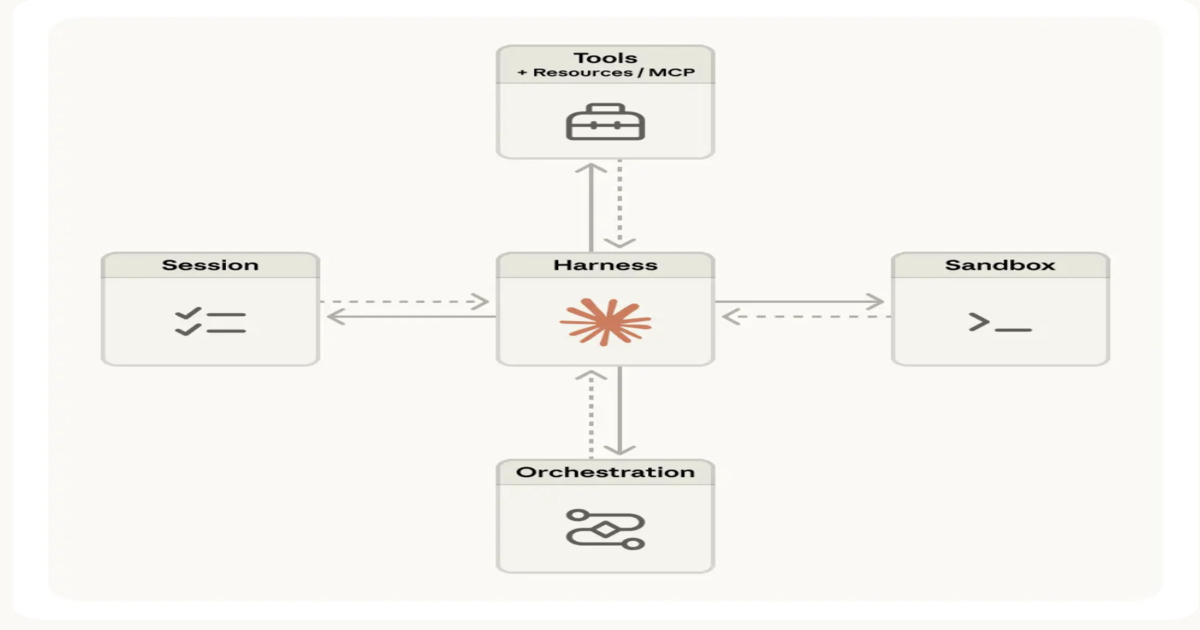

Anthropic has unveiled Managed Agents on Claude, an execution layer designed to simplify the deployment of AI agents. It decouples agent logic from runtime concerns such as orchestration, sandboxing, and state management, so teams do not have to build that infrastructure themselves. It supports long-running, multi-step processes that integrate with external tools, with error recovery and session continuity provided by a meta-harness architecture. The aim is to make complex, agent-driven tasks easier to build and run.

Anthropic introduces Managed Agents on Claude, a managed execution layer for agent-based workflows. It separates agent logic from runtime concerns like orchestration, sandboxing, state management, and credentials. The system supports long-running multi-step workflows with external tools, error recovery, and session continuity via a meta-harness architecture.
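The separation described above can be illustrated with a minimal sketch. It is not Anthropic's API: `Session`, `run`, `flaky_lookup`, and `summarize` are hypothetical names, and the assumption is simply that agent logic is a list of plain functions while a small harness owns retries and checkpointable state.

```python
import json
from dataclasses import dataclass, field

# Hypothetical names throughout; this is an illustration, not Anthropic's API.


@dataclass
class Session:
    """State the harness keeps so a run can be resumed later."""
    step: int = 0
    results: list = field(default_factory=list)

    def dump(self) -> str:
        return json.dumps({"step": self.step, "results": self.results})

    @classmethod
    def load(cls, raw: str) -> "Session":
        data = json.loads(raw)
        return cls(step=data["step"], results=data["results"])


def run(steps, session, max_retries=2):
    """Harness: run the remaining steps, retrying transient failures."""
    while session.step < len(steps):
        action = steps[session.step]
        for attempt in range(max_retries + 1):
            try:
                session.results.append(action(session.results))
                break
            except RuntimeError:
                if attempt == max_retries:
                    raise  # give up; completed steps stay recorded in session
        session.step += 1
    return session


# Agent logic: plain functions with no retry or persistence code.
calls = {"n": 0}


def flaky_lookup(prior):
    calls["n"] += 1
    if calls["n"] == 1:
        raise RuntimeError("transient tool failure")
    return "lookup-ok"


def summarize(prior):
    return f"summary of {prior[-1]}"


steps = [flaky_lookup, summarize]
session = run(steps, Session())

# Session continuity: serialize, restore, and resume without redoing work.
restored = run(steps, Session.load(session.dump()))
```

The agent functions know nothing about retries or persistence; swapping the harness for a managed one would, under this assumption, leave them unchanged.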

By Leela Kumili


Related Articles

- Anthropic wants to own your agent's memory, evals, and orchestration, and that should make enterprises nervous

  Just a few weeks after announcing Claude Managed Agents, Anthropic has updated the platform with three new capabilities that collapse infrastructure layers like memory, evaluation, and multi-agent orchestration into a single runtime. This move could threaten the standalone tools that many enterprises cobble together. The new capabilities ('Dreaming,' 'Outcomes,' and 'Multi-Agent Orchestration') aim to make agents inside Claude Managed Agents “more capable at handling complex tasks with minimal steering,” Anthropic said in a press release. Dreaming deals with memory, where agents “reflect” on their many sessions and curate memories so they learn and surface unknown patterns. Outcomes allows teams to define and set specific rubrics to measure an agent's success, while Multi-Agent Orchestration breaks jobs down so a lead agent can delegate to other agents.

  Claude Managed Agents ideally provides enterprises with a simpler path to deploy agents and embeds orchestration logic in the model layer. It’s an end-to-end platform to manage state, execution graphs, and routing. With the addition of Dreaming, Outcomes, and Multi-Agent Orchestration, Claude Managed Agents expands its capabilities even further and directly competes with tools like LangGraph or CrewAI, as well as external evaluation frameworks, RAG memory architectures, and QA loops.

  An integration threat

  Enterprises must now ask: Should we ditch our flexible, modular system in favor of an agent platform that brings almost everything in-house? Anthropic designed Claude Managed Agents to share context, state, and traceability in one place. This means the platform sees every decision agents make, rather than enterprises having to wire separate systems together. It sounds practical to have one platform that does everything. But not all enterprises want a full-service system.

  Claude Managed Agents already faces criticism that it encourages vendor lock-in because it owns most of the architecture and tools that govern agents. In the current paradigm, an organization may run Managed Agents but keep multi-agent orchestration, memory, or evaluations in a separate space to preserve flexibility. The platform offers a fully hosted runtime, which means memory and orchestration run on infrastructure the enterprise does not own. This can become a compliance nightmare for organizations that have to prove data residency. Another problem to consider is that enterprises already in the middle of large-scale AI transformations must cobble together workarounds to deal with the constraints of their tech stack. Not every workflow is easily replaceable by switching to Claude Managed Agents.

  Dreaming and Outcomes against current tools

  Most enterprises have a fragmented approach to AI deployment. For example, they may use LangGraph or CrewAI for agent routing and workflow management, Pinecone as a vector database for long-term memory, DeepEval for external evaluation, and human-in-the-loop quality assurance to review some tasks. Anthropic hopes to do away with all of that.

  With Dreaming, Anthropic approaches memory by allowing users to actively rewrite it between sessions, so the agent essentially learns from its mistakes. Anthropic says this capability is useful for long-running states and orchestration. Current systems often handle memory persistence by storing embeddings, retrieving relevant context, and adding more state over time. Outcomes addresses the evaluation portion by detailing expectations for agents. Instead of relying on external quality checks, which are often done by a team of humans, Anthropic is bringing evaluation into the orchestration layer rather than above it.

  But it’s the Multi-Agent Orchestration capability that pits Claude Managed Agents against orchestration frameworks from Microsoft, LangChain, CrewAI, and others. Model providers like Anthropic and OpenAI have already begun pushing aggressively into this space, arguing that bringing this to the model layer gives teams better control.

  Big decisions to make

  Enterprises face a big decision, and it could depend on where they are in agent maturity. If an organization is still experimenting with agents and has not deployed many in production, it may find moving to Claude Managed Agents and configuring Dreaming and Outcomes to its needs much easier. This is the stage of development where, even if enterprises are using a third-party orchestrator like LangChain, they’re still customizing it. But for those who are already further along in the process, the calculation becomes trickier. It’s now a matter of parallel evaluation and better understanding of their processes.

  Businesses, though, will face the same decision even if they don’t intend to use Claude Managed Agents. Anthropic has signaled that other model and platform providers will likely shift their product roadmaps to a similar model that keeps everything locked in the same system, because models may become interchangeable, but the tooling and orchestration infrastructure will not.

- Google and AWS split the AI agent stack between control and execution

  The era of enterprises stitching together prompt chains and shadow agents is nearing its end as more options for orchestrating complex multi-agent systems emerge. As organizations move AI agents into production, the question remains: "how will we manage them?" Google and Amazon Web Services offer fundamentally different answers, illustrating a split in the AI stack. Google’s approach is to run agentic management on the system layer, while AWS’s harness method sets up in the execution layer.

  The debate on how to manage and control agents gained new energy this past month as competing companies released or updated their agent builder platforms (Anthropic with the new Claude Managed Agents and OpenAI with enhancements to the Agents SDK), giving developer teams options for managing agents. AWS, with new capabilities added to Bedrock AgentCore, is optimizing for velocity, relying on harnesses to bring agents to product faster while still offering identity and tool management. Meanwhile, Google’s Gemini Enterprise adopts a governance-focused approach using a Kubernetes-style control plane. Each method offers a glimpse into how agents move from short-burst task helpers to longer-running entities within a workflow.

  Upgrades and umbrellas

  To understand where each company stands, here’s what’s actually new. Google released a new version of Gemini Enterprise, bringing its enterprise AI agent offerings (Gemini Enterprise Platform and Gemini Enterprise Application) under one umbrella. The company has rebranded Vertex AI as Gemini Enterprise Platform, though it insists that, aside from the name change and new features, it’s still fundamentally the same interface.

  “We want to provide a platform and a front door for companies to have access to all the AI systems and tools that Google provides,” Maryam Gholami, senior director, product management for Gemini Enterprise, told VentureBeat in an interview. “The way you can think about it is that the Gemini Enterprise Application is built on top of the Gemini Enterprise Agent Platform, and the security and governance tools are all provided for free as part of Gemini Enterprise Application subscription.”

  On the other hand, AWS added a new managed agent harness to Bedrock AgentCore. The company said in a press release shared with VentureBeat that the harness “replaces upfront build with a config-based starting point powered by Strands Agents, AWS’s open source agent framework.” Users define what the agent does, the model it uses, and the tools it calls, and AgentCore does the work to stitch all of that together to run the agent.

  Agents are now becoming systems

  The shift toward stateful, long-running autonomous agents has forced a rethink of how AI systems behave. As agents move from short-lived tasks to long-running workflows, a new class of failure is emerging: state drift. As agents continue operating, they accumulate state: memory, tool responses, and evolving context. Over time, that state becomes outdated. Data sources change, or tools return conflicting responses. The agent then becomes more vulnerable to inconsistencies and less truthful. Agent reliability becomes a systems problem, and managing that drift may need more than faster execution; it may require visibility and control. It’s this failure point that platforms like Gemini Enterprise and AgentCore try to prevent.

  Though this shift is already happening, Gholami admitted that customers will dictate how they want to run and control any long-running agent. “We are going to learn a lot from customers where they would be using long-running agents, where they just assign a task to these autonomous agents to just go ahead and do,” Gholami said. “Of course, there are tricks and balances to get right and the agent may come back and ask for more input.”

  The new AI stack

  What’s becoming increasingly clear is that the AI stack is separating into distinct layers, each solving different problems. AWS and, to a certain extent, Anthropic and OpenAI optimize for faster deployment. Claude Managed Agents abstracts much of the backend work for standing up an agent, while the Agents SDK now includes support for sandboxes and a ready-made harness. These approaches aim to lower the barrier to getting agents up and running. Google offers a centralized control panel to manage identity, enforce policies, and monitor long-running behaviors. Enterprises likely need both.

  As some practitioners see it, businesses have to have a serious conversation about how much risk they are willing to take. “The main takeaway for enterprise technology leaders considering these technologies at the moment may be formulated this way: while the agent harness vs. runtime question is often perceived as build vs. buy, this is primarily a matter of risk management. If you can afford to run your agents through a third-party runtime because they do not affect your revenue streams, that is okay. On the contrary, in the context of more critical processes, the latter option will be the only one to consider from a business perspective,” Rafael Sarim Oezdemir, head of growth at EZContacts, told VentureBeat in an email.

  Iterating quickly lets teams experiment and discover what agents can do, while centralized control adds a layer of trust. What enterprises need is to ensure they are not locked into systems designed purely for a single way of executing agents.

- Anthropic’s 10 AI Agents are Redefining Finance Work

  The headline may sound extreme here. Of course, Claude is not replacing CFOs tomorrow morning. But with the debut of Claude’s new Financial Services Solution by Anthropic, it has clearly moved in a new direction in the world of finance, one where AI does way more than crunch numbers or explain stuff. Think specific financial […] The post Anthropic’s 10 AI Agents are Redefining Finance Work appeared first on Analytics Vidhya.

