1 min read • from InfoQ

Cloudflare Builds High-Performance Infrastructure for Running LLMs

Our take

Cloudflare has unveiled new infrastructure for running large language models across its global network. These models need expensive hardware and must handle large volumes of incoming and outgoing text, so Cloudflare separates input processing (often called prefill) from output generation (decode) and runs each on its own optimized systems. The split makes sense because the two phases load hardware differently. Prefill handles the whole prompt in one compute-heavy pass. Decode produces tokens one at a time and is typically limited by memory bandwidth. Separating them lets each phase be tuned and scaled independently, which Cloudflare presents as a way to improve performance and scalability for AI workloads on its network.
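To make the split between input processing and output generation concrete, here is a minimal Python sketch of disaggregated serving under a toy model. A prefill step processes each prompt in a single pass and builds a cache, then hands it to a separate decode step that generates output one token at a time. All names (`Request`, `KVCache`, `prefill_worker`, `decode_worker`) and the arithmetic "model" are hypothetical placeholders. This is not Cloudflare's implementation or API.

```python
from dataclasses import dataclass, field
from queue import Queue

VOCAB_SIZE = 50  # hypothetical toy vocabulary size


@dataclass
class KVCache:
    """Hypothetical stand-in for the per-request attention cache passed from prefill to decode."""
    states: list = field(default_factory=list)


def toy_state(token: int, position: int) -> int:
    # Placeholder for the key/value projections a real model would compute per token.
    return (token * 31 + position * 7) % 1000


def toy_next_token(cache: KVCache) -> int:
    # Placeholder for a forward pass: the next token depends on every cached state.
    return sum(cache.states) % VOCAB_SIZE


@dataclass
class Request:
    request_id: str
    prompt: list
    max_new_tokens: int
    cache: KVCache = field(default_factory=KVCache)
    output: list = field(default_factory=list)


def prefill_worker(inbox: Queue, handoff: Queue) -> None:
    """Input processing: builds the cache for the whole prompt in one pass, then hands it off."""
    while not inbox.empty():
        req = inbox.get()
        req.cache.states = [toy_state(tok, pos) for pos, tok in enumerate(req.prompt)]
        handoff.put(req)


def decode_worker(handoff: Queue, done: list) -> None:
    """Output generation: emits one token per step, extending the transferred cache."""
    while not handoff.empty():
        req = handoff.get()
        for _ in range(req.max_new_tokens):
            tok = toy_next_token(req.cache)
            req.output.append(tok)
            req.cache.states.append(toy_state(tok, len(req.cache.states)))
        done.append(req)


# Usage: the two workers share only the handoff queue.
inbox, handoff, done = Queue(), Queue(), []
inbox.put(Request("a", prompt=[1, 2, 3], max_new_tokens=4))
inbox.put(Request("b", prompt=[9, 8], max_new_tokens=2))
prefill_worker(inbox, handoff)
decode_worker(handoff, done)
for req in done:
    print(req.request_id, req.output)
```

Because the two workers communicate only through the handoff queue, each could run on its own pool of machines and scale separately. In a real deployment, the handoff would move the attention cache between systems over the network. This sketch does not model that transfer.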

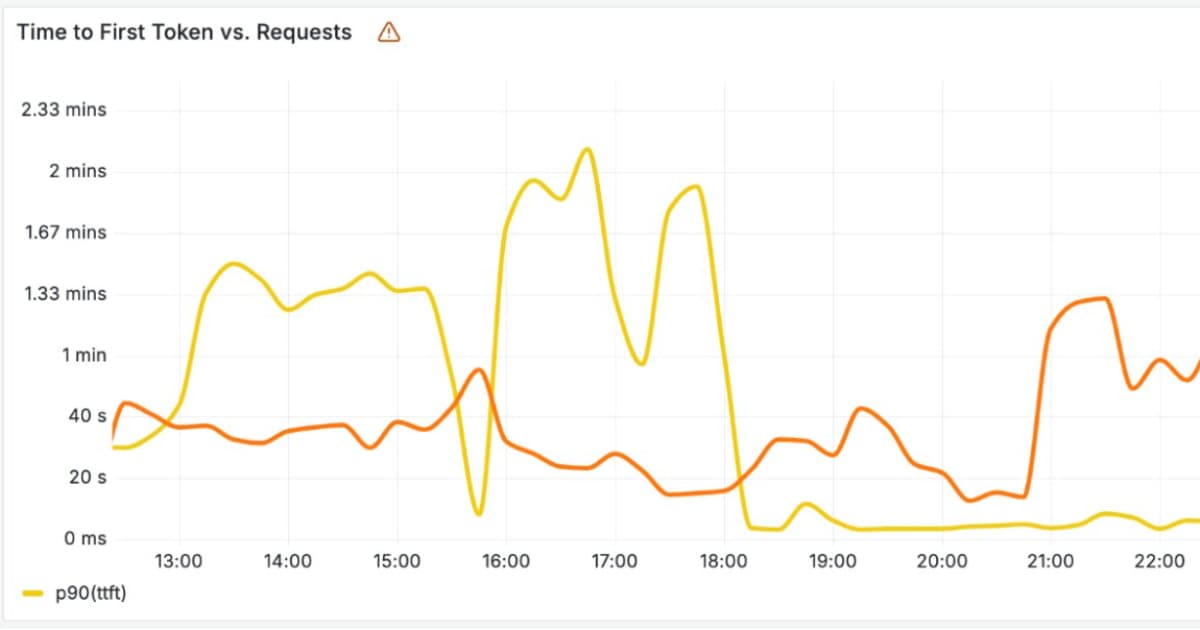

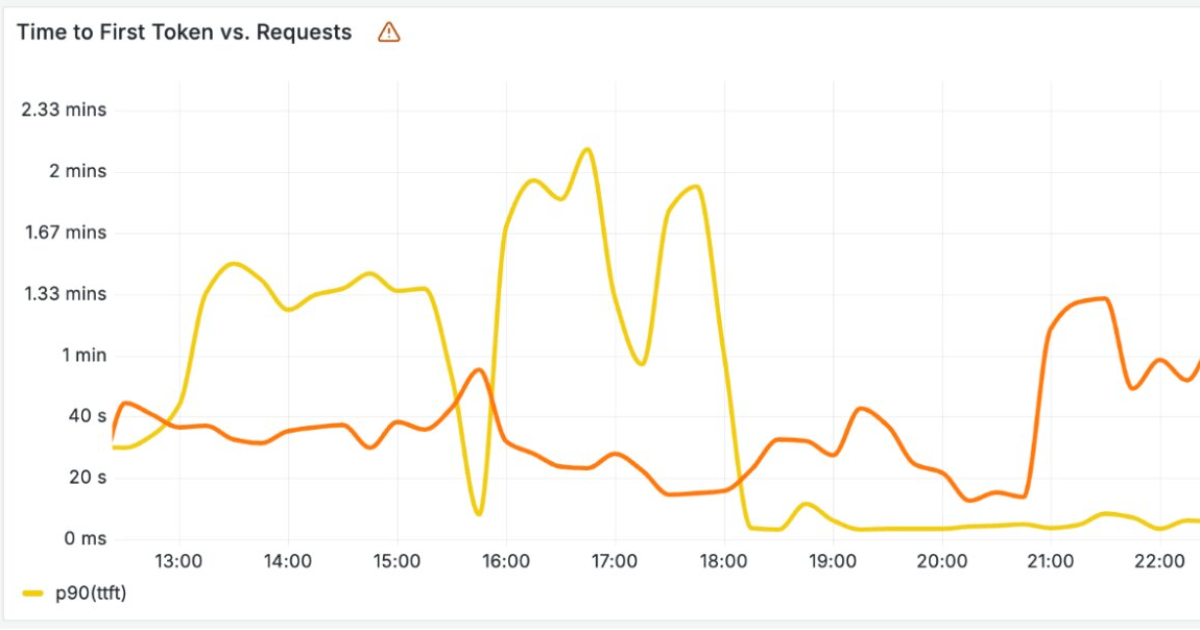

Cloudflare has recently announced new infrastructure designed to run large AI language models across its global network. As these models rely on costly hardware and must handle large volumes of incoming and outgoing text, Cloudflare separated the model's input processing and output generation onto different optimized systems.

By Renato Losio


Tagged with

#large dataset processing, #natural language processing for spreadsheets, #natural language processing, #AI formula generation techniques, #generative AI for data analysis, #rows.com, #Excel alternatives for data analysis, #big data performance, #Cloudflare, #infrastructure, #AI language models, #large AI models, #global network, #input processing, #output generation, #optimized systems, #costly hardware, #text processing, #incoming text, #outgoing text