•1 min read•from InfoQ

OpenAI Introduces WebSocket-Based Execution Mode to Reduce Latency in Agentic Workflows

Our take

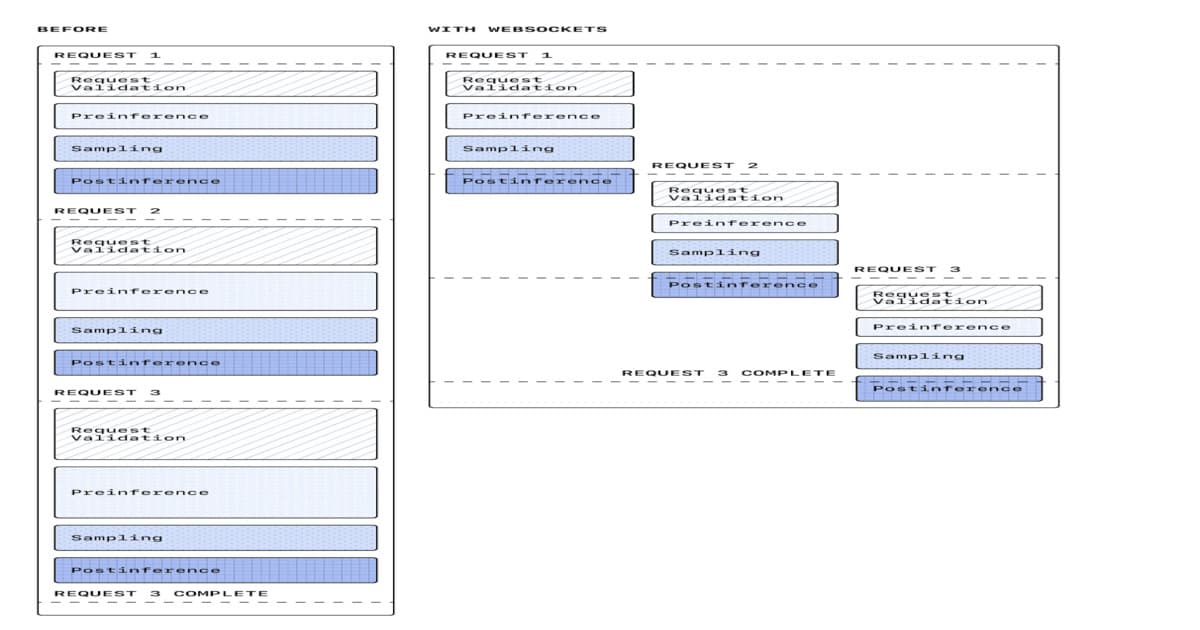

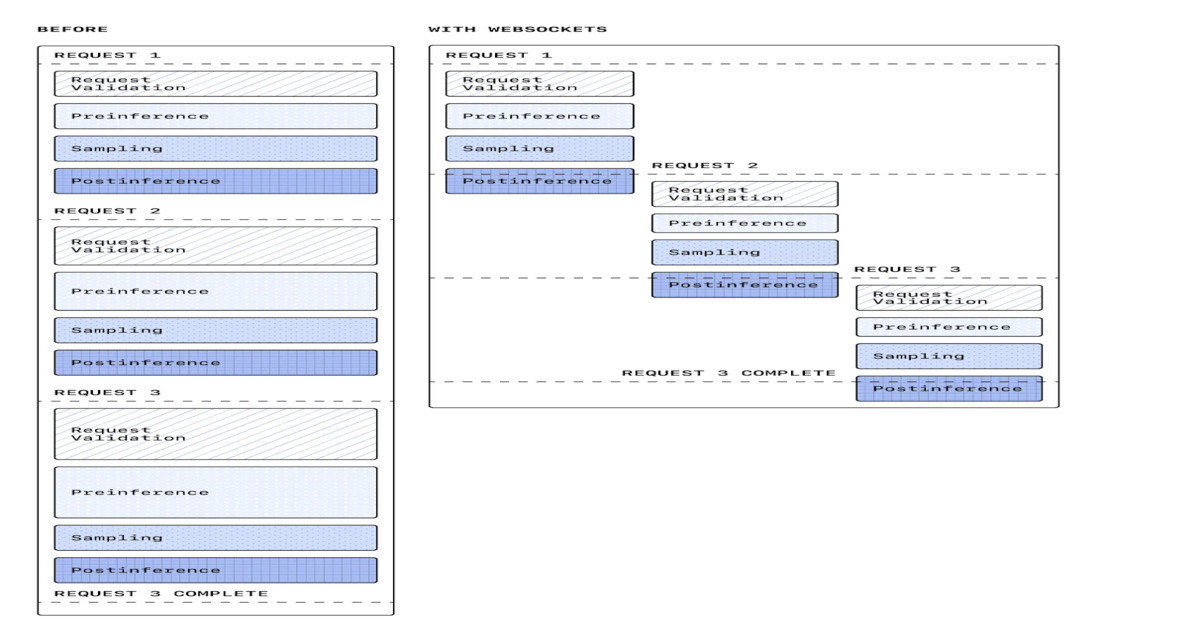

OpenAI has introduced a WebSocket-based execution mode for its Responses API, aimed at agentic workflows. By replacing repeated HTTP request-response cycles with a persistent connection, the update is reported to reduce latency by up to 40 percent. The change targets streaming, tool execution, and multi-step orchestration in production-scale AI systems, which should benefit coding agents and other real-time AI applications.

OpenAI introduces a WebSocket-based execution mode for its Responses API to improve agentic workflow performance in coding agents and real-time AI systems. The update reduces latency by up to 40 percent by replacing HTTP request-response cycles with persistent connections, improving streaming, tool execution, and multi-step orchestration in production-scale AI systems.
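
To illustrate why a persistent connection can help multi-step workflows, here is a minimal back-of-the-envelope sketch. It assumes a simplified cost model in which every HTTP request pays a fixed overhead (connection setup, request framing), while a persistent connection pays that overhead only once. The function names and all numbers are hypothetical and are not OpenAI measurements. The model does not attempt to explain the reported 40 percent figure, which may depend on other factors.

```python
def total_latency_ms(steps, overhead_ms, work_ms, persistent):
    """Total latency for `steps` sequential round trips under a toy model.

    overhead_ms: hypothetical fixed cost of establishing a request/connection.
    work_ms:     hypothetical time spent on the actual step (model + tool call).
    """
    if persistent:
        # Persistent connection: the overhead is paid once, up front.
        return overhead_ms + steps * work_ms
    # Request-response: the overhead is paid again on every step.
    return steps * (overhead_ms + work_ms)


def relative_saving(steps, overhead_ms, work_ms):
    """Fraction of total latency saved by the persistent connection."""
    per_request = total_latency_ms(steps, overhead_ms, work_ms, persistent=False)
    persistent = total_latency_ms(steps, overhead_ms, work_ms, persistent=True)
    return (per_request - persistent) / per_request


if __name__ == "__main__":
    # Illustrative values only: 50 ms overhead, 200 ms of work per step.
    for steps in (1, 5, 20):
        saving = relative_saving(steps, overhead_ms=50, work_ms=200)
        print(f"{steps:>2} steps: {saving:.0%} saved")
```

Under this toy model a single-step call gains nothing, and the saving grows with the number of sequential steps, which is consistent with the article's focus on multi-step orchestration.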

By Leela Kumili